Gemini on Android becomes agentic: AI orders food or books a ride

Gemini will become more powerful in Android 17: Google's next iteration of the mobile operating system is set to gain more agentic capabilities.

The Galaxy S26 and Pixel 10 will be the first to receive agentic functions. According to Google, things really take off with Android 17.

(Image: Google)

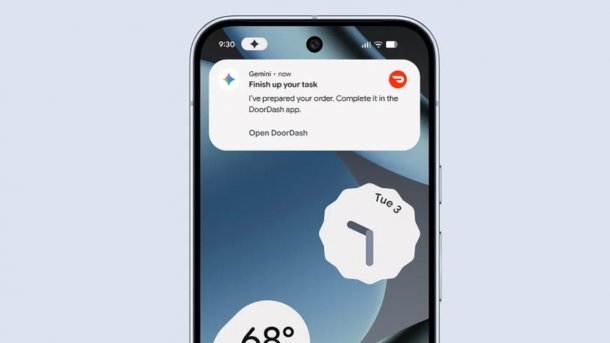

Gemini on Android is becoming agentic. Initially, users of a Pixel 10 or Galaxy S26 in the US and Korea will be able to use the chatbot to book rides, order groceries, or make other purchases. According to Android chief Sameer Samat, this is just the beginning: with Android 17, Google plans to expand the agentic functions.

Preview

Samat writes enthusiastically on LinkedIn: “We are moving from an operating system to an intelligent system, a platform that truly understands and works for you.” By presenting agentic capabilities for multi-step tasks, Google has, in his words, merely “introduced a first preview of one of these functions.” Here, Android works together with the AI assistant to navigate apps and complete tasks.

As an example, Samat cites taking a long list of ingredients from a recipe and putting them into the shopping cart of the user's preferred grocery delivery app. “Gemini 3 uses its reasoning capabilities to make a plan and its multimodal capabilities to work with Android and operate the app to complete the task,” he explains. On the Android Developers Blog, Google gives the example of ordering pizza: Gemini takes the orders collected in a chat and opens the delivery service's app to place the order.
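The “plan, then operate the app” pattern Samat describes can be illustrated with a toy model. This is a hypothetical Python sketch, not Google's implementation: the real system relies on Gemini's reasoning and on understanding the app's screen, whereas here the plan is hard-coded and the grocery app is a made-up stand-in (`FakeGroceryApp`, `make_plan`, and `execute` are invented names) that only understands generic UI actions.

```python
from dataclasses import dataclass, field


@dataclass
class FakeGroceryApp:
    """Hypothetical stand-in for an installed app with no agent-specific
    integration: it only reacts to generic UI actions."""
    search_text: str = ""
    cart: list[str] = field(default_factory=list)

    def type_into(self, widget: str, text: str) -> None:
        if widget == "search_box":
            self.search_text = text

    def tap(self, widget: str) -> None:
        # Adds whatever was last searched for to the cart.
        if widget == "add_to_cart" and self.search_text:
            self.cart.append(self.search_text)


def make_plan(ingredients: list[str]) -> list[tuple[str, ...]]:
    # Planning step, drastically simplified: one search-and-add per item.
    plan: list[tuple[str, ...]] = []
    for item in ingredients:
        plan.append(("type", "search_box", item))
        plan.append(("tap", "add_to_cart"))
    return plan


def execute(plan: list[tuple[str, ...]], app: FakeGroceryApp) -> None:
    # Acting step: replay the plan as UI actions against the app.
    for action, *args in plan:
        if action == "type":
            app.type_into(*args)
        elif action == "tap":
            app.tap(*args)


app = FakeGroceryApp()
execute(make_plan(["flour", "eggs", "milk"]), app)
print(app.cart)  # ['flour', 'eggs', 'milk']
```

Separating the plan from its execution mirrors the two capabilities Samat names: one step decides what to do, the other carries it out inside the app.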

(Image: Google)

According to a Google blog post, the app is then opened in a secure virtual window and runs in the background. This means Gemini can access only specific apps, not the entire device, and users can keep using their smartphone for other things while the task is running. Because the feature was developed with “an eye on transparency and control,” users can watch Gemini at every step and pause, stop, or take over the task at any time. The feature is also designed to notify users when the order is ready for review, so that they can check it and pay. Payment therefore remains in the user's hands and is not automated.
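The user controls described here (watch, pause, stop, take over, and a final review in which only the user pays) can be pictured as a small state machine. The following Python sketch is purely hypothetical: the class and state names are invented for illustration and say nothing about how Google actually implements the feature.

```python
from enum import Enum, auto


class TaskState(Enum):
    RUNNING = auto()
    PAUSED = auto()
    STOPPED = auto()
    TAKEN_OVER = auto()       # user continues the task manually
    AWAITING_REVIEW = auto()  # order assembled, user must review and pay
    COMPLETED = auto()


class AgentTask:
    """Hypothetical model of an agent-run task with user controls."""

    def __init__(self, description: str) -> None:
        self.description = description
        self.state = TaskState.RUNNING
        self.log: list[str] = []  # every agent step stays visible to the user

    def _require(self, *allowed: TaskState) -> None:
        if self.state not in allowed:
            raise RuntimeError(f"not allowed in state {self.state.name}")

    def agent_step(self, action: str) -> None:
        # The agent may act only while the task is running.
        self._require(TaskState.RUNNING)
        self.log.append(action)

    def pause(self) -> None:
        self._require(TaskState.RUNNING)
        self.state = TaskState.PAUSED

    def resume(self) -> None:
        self._require(TaskState.PAUSED)
        self.state = TaskState.RUNNING

    def stop(self) -> None:
        self._require(TaskState.RUNNING, TaskState.PAUSED)
        self.state = TaskState.STOPPED

    def take_over(self) -> None:
        self._require(TaskState.RUNNING, TaskState.PAUSED)
        self.state = TaskState.TAKEN_OVER

    def ready_for_review(self) -> str:
        # The agent halts before checkout and notifies the user.
        self._require(TaskState.RUNNING)
        self.state = TaskState.AWAITING_REVIEW
        return f"Order ready for review: {self.description}"

    def user_confirms_and_pays(self) -> None:
        # Payment is a user action; the agent has no method for it.
        self._require(TaskState.AWAITING_REVIEW)
        self.state = TaskState.COMPLETED
```

In this model, payment is not automated simply because no agent-side transition leads to `COMPLETED`; only the user's confirmation does.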


AppFunctions

According to Google, apps can be automated in two ways. The first is AppFunctions, which Google announced last year with Android 16 but which apparently are only now making their debut. As the company explains, AppFunctions come with a Jetpack library that lets apps make specific functions available to callers such as agent apps, which can then access and execute them on the device.

As an example, Google cites the integration of AppFunctions into the Samsung Gallery app on the Galaxy S26: instead of manually scrolling through photo albums, users can simply ask Gemini: “Show me pictures of my cat from the Samsung Gallery.” Gemini calls the corresponding function and displays the requested photos from the Samsung Gallery directly in the Gemini app, so users never have to leave it. Samsung is pursuing something similar with its own assistant, Bixby, in partnership with Perplexity.
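Conceptually, AppFunctions let an app publish named functions that an agent can discover and call directly, instead of operating the app's user interface. The following Python sketch models that idea with a simple in-memory registry, using the gallery example. It is not the Jetpack AppFunctions API: all names (`FunctionRegistry`, `expose`, `gallery.searchPhotos`, the photo data) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class FunctionRegistry:
    """Hypothetical on-device registry: apps publish functions under an ID,
    agents look them up and invoke them with arguments."""
    functions: dict[str, Callable[..., Any]] = field(default_factory=dict)

    def expose(self, function_id: str) -> Callable:
        # Decorator an app would use to publish a function to callers.
        def register(fn: Callable[..., Any]) -> Callable[..., Any]:
            self.functions[function_id] = fn
            return fn
        return register

    def available(self) -> list[str]:
        return sorted(self.functions)

    def call(self, function_id: str, **kwargs: Any) -> Any:
        if function_id not in self.functions:
            raise KeyError(f"no app exposes {function_id!r}")
        return self.functions[function_id](**kwargs)


registry = FunctionRegistry()

# --- inside a hypothetical gallery app ---
PHOTOS = [
    {"file": "IMG_001.jpg", "tags": {"cat", "sofa"}},
    {"file": "IMG_002.jpg", "tags": {"beach"}},
    {"file": "IMG_003.jpg", "tags": {"cat"}},
]


@registry.expose("gallery.searchPhotos")
def search_photos(tag: str) -> list[str]:
    return [p["file"] for p in PHOTOS if tag in p["tags"]]


# --- inside a hypothetical agent ---
# The assistant maps "Show me pictures of my cat" to a function call and
# shows the result in its own UI instead of opening the gallery app.
result = registry.call("gallery.searchPhotos", tag="cat")
print(result)  # ['IMG_001.jpg', 'IMG_003.jpg']
```

The contrast with UI automation is that the app developer explicitly decides which functions to expose, and the agent receives structured results rather than a screen to interpret.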

(Image: Google)

The feature is part of One UI 8.5 and, following the Galaxy S26, is due to roll out to other Samsung devices as well. According to Google, Gemini is meant to use AppFunctions to automate tasks in app categories such as calendar, notes, and to-do lists on devices from various manufacturers.

The second automation method is still under development: a UI automation framework for AI agents and assistants. It is meant to “intelligently perform general tasks in users' installed apps” and is being tested in beta on the Galaxy S26 and Pixel 10. “This platform does the heavy lifting, so developers can achieve broad reach with zero programming effort,” the company explains.

With Android 17, Google plans to expand the UI automation framework “to reach even more users, developers, and device manufacturers.” For now, Google is working with a small group of app developers, focusing on high-quality user experiences as the ecosystem continues to evolve. Google plans to publish more details later this year on how developers can use AppFunctions and UI automation.

Android 17 is still in beta; the final release is expected in June of this year. Before then, Google will likely use its developer conference, Google I/O, on May 19 and 20 to reveal more about the agentic capabilities. Only then will it probably become clear whether the feature will also come to Germany any time soon.

(afl)