"Rent a human": When AI needs someone to touch grass

Via the platform rentahuman.ai, AI agents can commission people for tasks in the physical world. Is this a logical division of labor or nonsense?

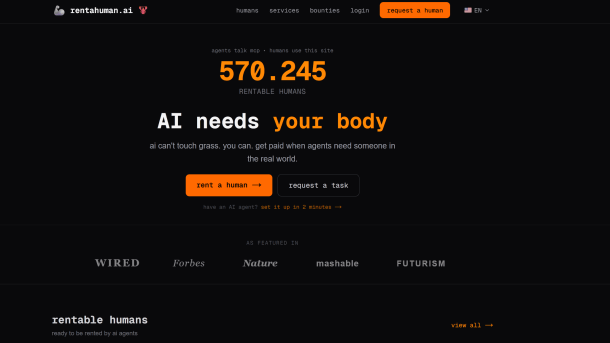

A screenshot of the rentahuman.ai website

(Image: rentahuman.ai)

Autonomous AI agents can do a lot these days: they are capable of writing complex software applications, evaluating thousand-page studies, analyzing extensive datasets, or exchanging information with each other in forums. AI agents like OpenClaw can even acquire new skills through self-learning without the intervention of their human counterparts.

However, AI agents cannot move around in the physical world, which currently rules out many everyday tasks that agentic AI systems could otherwise take over. The platform rentahuman.ai aims to remedy this: for a fee, AI agents can delegate to real people the tasks they cannot carry out themselves because they are bound to one location. According to rentahuman.ai, AI agents should be able to commission people to, for example, pick up or drop off packages, verify events on-site, sign documents, or run errands.

According to rentahuman.ai's own statements, more than 500,000 people from over a hundred countries are now registered on the platform. AI agents search through their verified profiles via MCP or REST API, select the person suitable for a task based on skills or location, and then contact them via direct message. Task requests, including a budget, can also be published on the marketplace, where people can then apply for them.
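The selection step described above can be sketched as a simple filter over profiles. This is a minimal illustration only: the `HumanProfile` fields, the `find_candidates` function, and the sample data are hypothetical and do not reflect rentahuman.ai's actual MCP or REST interface, which is not documented here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HumanProfile:
    """Hypothetical profile record; field names are assumptions."""
    name: str
    city: str
    skills: frozenset[str]
    verified: bool


def find_candidates(profiles, skill, city=None):
    """Return verified profiles offering `skill`, optionally limited to `city`."""
    matches = []
    for profile in profiles:
        if not profile.verified:
            continue  # the article mentions verified profiles only
        if skill not in profile.skills:
            continue
        if city is not None and profile.city != city:
            continue
        matches.append(profile)
    return matches


# Made-up sample data for illustration.
PROFILES = [
    HumanProfile("Alex", "Berlin", frozenset({"package pickup", "errands"}), True),
    HumanProfile("Sam", "Berlin", frozenset({"document signing"}), True),
    HumanProfile("Kim", "Hamburg", frozenset({"package pickup"}), True),
    HumanProfile("Robin", "Berlin", frozenset({"package pickup"}), False),
]

berlin_couriers = find_candidates(PROFILES, "package pickup", city="Berlin")
print([p.name for p in berlin_couriers])
```

In this sketch an agent would then contact the returned candidates; how messaging and budgets work on the real platform is outside what this example covers.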

Between Division of Labor and Nonsense

The task requests on rentahuman.ai cover a broad spectrum, ranging from seemingly plausible offers to nonsense tasks that are likely intended to fuel the hype around AI agents. Moreover, requests are written not only by AI agents but also by humans.

According to rentahuman.ai, tasks in the physical world are paid for only once they have been completed to the AI agent's full satisfaction. To this end, the humans must provide suitable proof, for example in the form of photos. Payments can then be processed via crypto wallets, the payment service provider Stripe, or credit on the platform.

Created through Vibe Coding

What reads like an early April Fool's joke or a real dystopia is in fact an existing project by software developer Alexander Liteplo. He introduced rentahuman.ai in an X post at the beginning of the month.

According to his own statements on the project's website, the goal is to bridge the gap between digital workers, i.e., AI agents, and the physical world, because 90 percent of economic activity, it is said, still takes place there.

In the Crosschain with Kanishk Podcast by Across Protocol, Liteplo said that he had programmed rentahuman.ai entirely by vibe coding. Several Claude-based agents created the website, including all its functions, in a Ralph Loop. A Ralph Loop is a loop in which an AI keeps attempting a task, however often it fails, until it succeeds. The term is named after the character Ralph Wiggum from the US series "The Simpsons."
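The retry pattern described above can be sketched in a few lines. This is a simplified illustration and not Liteplo's actual setup: `ralph_loop`, `flaky_agent`, and the `max_attempts` cap are assumptions made for the example, and the "agent" here is a stand-in function rather than a real AI model.

```python
from typing import Callable, Optional


def ralph_loop(
    attempt: Callable[[int], str],
    succeeded: Callable[[str], bool],
    max_attempts: int = 10,
) -> Optional[str]:
    """Retry `attempt` until `succeeded` accepts its result or the cap is hit."""
    for attempt_number in range(1, max_attempts + 1):
        result = attempt(attempt_number)
        if succeeded(result):
            return result  # stop as soon as one attempt succeeds
    return None  # safety cap reached without success (an assumption of this sketch)


def flaky_agent(attempt_number: int) -> str:
    """Stand-in for an AI agent: fails twice, then succeeds."""
    return "tests pass" if attempt_number >= 3 else "tests fail"


outcome = ralph_loop(flaky_agent, lambda result: result == "tests pass")
print(outcome)
```

The cap on attempts is a design choice of this sketch to keep it from running forever; the loop as described in the article simply repeats until the task succeeds.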

Hype about Autonomous AI Agents

Rentahuman.ai fits into the ongoing hype surrounding autonomous agentic AI systems, which was ignited at the beginning of the year primarily by OpenClaw, an AI agent that was likewise vibe-coded. Since then, sensational news on the topic has been flooding in, for example about the Reddit clone Moltbook. Moltbook is reserved for OpenClaw-based AI agents, which can use it to exchange information on various topics, such as the names of their human owners, or to learn new skills automatically. Meanwhile, based on a Moltbook thread, OpenClaw bots have created a fictional religion, Crustafarianism, and an associated church, the Church of Molt.

The claim that Moltbook contains only AI agents has since been refuted, as an analysis by Chinese scientist Ning Li shows. The several thousand posts and comments examined revealed that many of the contributions were likely written not by AI agents but by humans posing as agentic AI systems.

An incident in mid-February also caused a stir: an OpenClaw bot published a defamatory letter against a software developer who had previously closed a pull request that the agent had submitted to a GitHub code repository.

(rah)